Computational Methods in Optimization

David Gleich

Purdue University

Spring 2026

Course number CS-52000

Tuesday, Thursday 9:00-10:15

Location Forney B124

Course project

The course project is worth 30% of your final grade.

It will involve a statement of choice on

- March 30th based on original typo. (No extension)

and then weekly reports on

- April 3th

- April 10th

- April 17th

The final project is due May 1st.

There is no late work accepted for the project.

The statement of choice due on March 30th is worth a smaller number of points but will result in a zero on the entire final project if you don't turn it in on time.

The weekly status reports should be 2-3 pages long and discuss the work you have done that week on the project. They are equally weighted and worth 1/3rd of the project grade in total (i.e. 10% of your final grade). This makes them worth about "one homework" homework.

The final project should be 15-20 pages, single spaced, in a readable typeset manuscript. It should describe what you did, why you did it, and what your results mean or say about your ideas. I like to think of a course report like this as equivalent to "1/3rd" of a research paper. The difference is that you should describe everything you did in the course project, whereas you'd only describe the successful ideas in a paper.

You may collaborate with one, and only one, other person on this project and submit a joint writeup. You must identify this collaborator in your selection of the project on March 30th. You may not share code, ideas, or discuss with any other project group outside of your partner. (Note that collaboration is encouraged -- there is no difference between grading collaborative projects and individual projects.)

LLM usage. You must pick between LLM-free project (you and your standard resources alone! No LLMs! Very simple code completion would be allowable) and LLM-using project. If you pick LLM-using, the standards and expectations are higher. I expect shorter but more dense writeups that explicitly detail and investigate the ideas. Using an LLM makes it "easier" to experiment, but harder to understand, thus, your grading will be based on how much understanding you communicate in your writeups. LLMs like throwing words at problems, most of which are irrelevant. You will likely need to edit them heavily for full points. LLM slop (irrelevant or extraneous text), will result in losing points. My goal is to engage with your ideas and understanding, not some statistical language model. I would strongly suggest writing things yourself based on the LLM summaries.

Note that Project 3 needs to be LLM-free. The point is to learn how to do this.

There are three choices for the project.

Project 1: Low-rank factorizations of the matrix-multiplication tensor

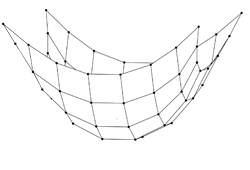

Let be -by- and be -by-. Let be the result , that is, standard matrix multiplication. Let be the vectorization of a matrix into a vector column-by-column. For examples It turns out there is a tensor called the matrix multiplication tensor that allows one to compute from the vectors and using the method Thus, in it's simplest form, the matrix multiplication tensor is a -by--by- tensor enables one to compute the result of an matrix matrix multiplication. If the tensor has a rank factorization, then there is an algorithm to compute a matrix-matrix product using only multiplication operations.

When (i.e. matrix multiplication), then the standard algorithm to multiply and has 8 multiplications. However, there exists a rank-7 factorization of this tensor for this problem. Consequently, there is an algorithm using only 7 multiplication operations. This line of reasoning gives rise to the matrix multiplication algorithm known as Strassen's method. In short, while it seems not so useful to do matrix multiplication with only 7 multiplication operations, we can view any larger matrix as a set of blocks and then recursively use a block algorithm. This ideas give the operation count for Strassen's method as .

The project here is to investigate algorithms for computer tensor factorizations of matrix multiplication tensors. This has been an active research area recently with results from Google's AlphaEvolve and independent researchers.

More specifically, the project is given a matrix multiplication tensor find a rank factorization of the form The goal is to make as small as possible such that you get zero error in an approximation of the form

Possible project approaches:

1) Focus on large scale tensors (i.e. large values of )

2) Focus on alternative objective functions (i.e. beyond least squares fitting)

3) Try and turn it into a sequence of related problems

4) Focus on reproducing known factorizations

5) Look at what happens with constrained factorizations.

Your goal is to say SOMETHING about the project. That is, you must go beyond just using an optimization software package to minimize the objective (although, that's "good enough" for a B.)

To get a B, you must minimize the least squares fitting function with a gradient based optimization scheme where you have worked out the gradient by hand and verified your gradient is correct.

Project 2: Faster Grokking for training a transformer

It's best to watch this video on grokking if you are interested in this project.

The study of grokking was done with modular arithmetic. The goal of this study is to train your own transformer model or similar DNN that groks modular arithmetic as was done in the original. But to do so, you won't use stochastic gradient descent. The goal is to train something as fast as possible by using tools from this class -- including explicit regularization. (i.e. directly minimizing a loss function with a weighted norm penalty function).

To get a B, you must use one of the algorithms from class, such as a quasi-Newton method, to "grok" a model to correctly evaluate a modular arithmetic algorithm. You may use automatic-differentiation. You must report the time your algorithm takes compared with standard stochastic gradient descent to "grok" the same way.

To get an A, you must go beyond and investigate another dimension of this problem:

- large input spaces (e.g. in thousands), this may require scaffolding / curriculum training

- studying regularization, implicit and explicit. Does grokking require regularization?

- model diversity -- what models can grok and which ones can't?

Project 3: An interior point LP solver

Throughout the class, we've stressed (when possible) some of the details that make real optimization software run. In this project, you'll be responsible for implemeting the most robust interior point LP solver you can.

You should read about interior point solvers in Primal-dual interior-point methods by Stephen J. Wright, SIAM 1997.

You must do this in Julia. (If you don't think this will be appropriate, let me know and we can discuss alternatives, but you must do so by April 10th.)

The interface I will evaluate is:

using MatrixDepot, SparseArrays

mutable struct IplpSolution

x::Vector{Float64} # the solution vector

flag::Bool # a true/false flag indicating convergence or not

cs::Vector{Float64} # the objective vector in standard form

As::SparseMatrixCSC{Float64} # the constraint matrix in standard form

bs::Vector{Float64} # the right hand side (b) in standard form

xs::Vector{Float64} # the solution in standard form

lam::Vector{Float64} # the solution lambda in standard form

s::Vector{Float64} # the solution s in standard form

end

mutable struct IplpProblem

c::Vector{Float64}

A::SparseMatrixCSC{Float64}

b::Vector{Float64}

lo::Vector{Float64}

hi::Vector{Float64}

end

function convert_matrixdepot(P::MatrixDepot.MatrixDescriptor)

# key_base = sort(collect(keys(mmmeta)))[1]

return IplpProblem(

vec(P.c), P.A, vec(P.b), vec(P.lo), vec(P.hi))

end

"""

soln = iplp(Problem,tol) solves the linear program:

minimize c'*x where Ax = b and lo <= x <= hi

where the variables are stored in the following struct:

Problem.A

Problem.c

Problem.b

Problem.lo

Problem.hi

and the IplpSolution contains fields

[x,flag,cs,As,bs,xs,lam,s]

which are interpreted as

a flag indicating whether or not the

solution succeeded (flag = true => success and flag = false => failure),

along with the solution for the problem converted to standard form (xs):

minimize cs'*xs where As*xs = bs and 0 <= xs

and the associated Lagrange multipliers (lam, s).

This solves the problem up to

the duality measure (xs'*s)/n <= tol and the normalized residual

norm([As'*lam + s - cs; As*xs - bs; xs.*s])/norm([bs;cs]) <= tol

and fails if this takes more than maxit iterations.

"""

function iplp(Problem, tol; maxit=100)

end

There is less flexibility here, but two focuses are:

1) Focus on robustness (solve all the problems)

2) Focus on speed (don't solve everything, but be very fast when you do solve it.)

The test problems all come from the University of Flordia Sparse Matrix repository: https://sparse.tamu.edu/LPnetlib

- 'afiro','brandy','fit1d','adlittle','agg','ganges','stocfor1', '25fv47', 'chemcom'

These matrices can be downloaded using the MatrixDepot.jl package

Specific requirements

Your code must be written in the spirit of reproducible research.

The final evaluation will take into account my ability to download

and run your code. Also, I will evaluate it on additional

problems from the LPnetlib collection and compare the solutions

against the Clp simplex code.

You may not use LLMs on this project.

Project X: Your own research

These projects are discouraged, but if there is a natural fit between your research and the objectives of the class, you may propose a project. In your proposal (to me), you must detail what you plan to do, how it compares with Project 1 and 2.

No collaboration will be allowed on projects on your own research.